Today's edition is a little less charty, and a bit more opinionated, than usual. The AI hype is real, and I find myself caught up in it, too. I think we could all use a reminder that the future hasn't been decided yet.

💙 Amanda

Speaking of uncertain futures: You can make mine a little more secure by becoming a paying member of Not-Ship.

These headlines look precise: "MIT study finds AI can already replace 11.7% of U.S. workforce"; "AI will affect almost 40% of jobs, IMF says"; "AI and automation will take 6% of US jobs by 2030."

They imply that someone, somewhere, has measured something real about our future careers.

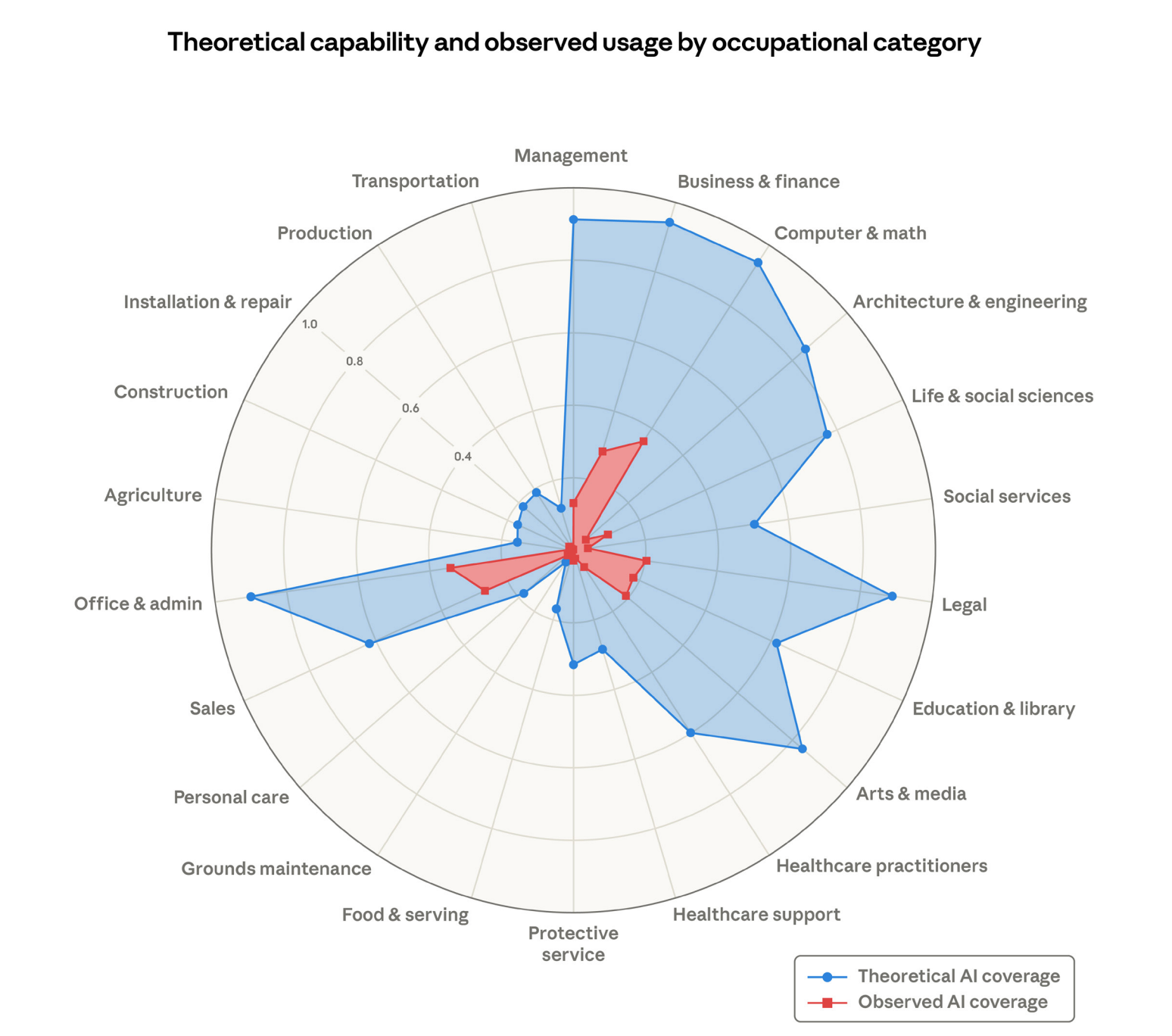

So does this recent, viral chart from Anthropic.

In actuality, these numbers are all guesses.

When AI companies, forecasters, and talking heads say your job will disappear, they're just playing baseball in the dark. They may be professionals, but they can't see any further than the rest of us.

Unfortunately, their speculation often seems more substantive than just a hunch.

That's because charts and statistical language are symbols of authority. Just like a lab coat, clipboard, or badge, they suggest expertise and can be powerful persuaders.

There's very little evidence that these guesses deserve that authority.

Extreme assumptions and crystal balls

Here's how most AI job exposure studies work: Analysts — often at consultancies, research firms or within AI companies themselves — take a job, break it into tasks and ask whether AI could do each of those tasks. It sounds systematic, but every step involves a judgement call, not measured data.

There's no objective answer to questions like: What counts as a distinct task? or What does "AI can do this" mean? Each researcher has to make their own decision, and that's why you'll find predictions of future AI automation ranging from 5% to 47% of all jobs.

But there's a bigger problem than methodology: There's no historical precedent to anchor these predictions.

Predicting the impact of AI — whether on your career, or the existence of humanity — isn't like forecasting the weather. Tomorrow's chance of rain is grounded in physics and centuries of historical data. With AI, though, we have no baseline or past experience to build from.

To make accurate forecasts, we'd need to know things like: How fast AI will improve, how businesses and workers will respond, and what new jobs may emerge. These are guesses upon guesses upon guesses.

You might suppose that guesses from experts are more valuable than guesses from you or me. Yet research consistently shows that their educated hunches aren't particularly good either.

Even a paper surveying thousands of AI authors about the technology's future felt obliged to admit it.

"Forecasting is difficult in general, and subject-matter experts have been observed to perform poorly," the paper notes in its lengthy caveats. "There are signs in this research and past surveys that these experts are not accurate forecasters across the range of questions we ask."

The performance of expertise

Nevertheless, these shots in the dark scare us. Charts don't just communicate information; they perform expertise and authority. And that's a problem when translating speculation about the future into numbers. Adding a percent sign or graphing these guesses gives them truthiness.

"Displaying scientific-looking elements such as brain scans, scientific jargon, chemical formulas, and even something as simple as graphs, can imbue evidence for a claim with a scientific halo that renders information more convincing," explains Aner Tal at Cornell University.

This halo effect is exactly what's happening with that viral Anthropic chart. It looks like rigorous measurement, but it's mostly just statistical theatre.

The irony is that fuzzier language would actually be more honest. In these cases, phrases such as "likely" or "maybe" can express uncertainty better than percentages and charts, simply because they don't imply a precision that doesn't exist.

Unfortunately, you're unlikely to see headlines like this anytime soon: "AI may affect a portion of white-collar work, though we're genuinely uncertain about the scale and timing." But that would be more accurate.

To be clear, I'm not saying your job is safe. AI is already reshaping how work gets done. I'm just saying nobody really knows what will happen — and so far, the numbers haven't matched the alarm.

Most studies of the actual impact AI has had on the labour market haven't found widespread job loss at all, though there is some evidence early-career workers in the most AI-exposed occupations have experienced a relative decline.

Which brings us back to that viral Anthropic chart.

It spawned headlines like "A 'Great Recession for white-collar workers' is absolutely possible" and "The jobs that AI could most certainly replace."

Yet the report it came from simply concluded: "We find no systematic increase in unemployment for highly exposed workers."

CAN'T GET ENOUGH?

I like to keep this newsletter at a reasonable length. This week, that meant cutting more detail than I like. Here's a bit of good follow-up reading:

Measuring AI job exposure: Academics Thomas Davenport and Miguel Parades wrote a detailed, and very readable, explainer about the pitfalls of predicting AI job loss.

"While it is appealing to predict the future of employment with regard to AI, it seems inevitable that such predictions are likely to be incorrect and misleading. There are simply too many unknown variables and unclear relationship between them to do a rigorous, quantitative analysis." (Davenport and Parades)

The authority of charts: In her entertaining piece "Looks like science, must be true!" Cornell researcher Aner Tal explains why the halo of science is so persuasive.

MORE SHADE

Last week I wrote about how shade is consistently a privilege of the wealthy. One of the authors of that research, Lukas Beuster, reached out to put an even finer point on the findings.

"One small thing worth adding, since it also got a bit lost in the MIT news coverage: We specifically looked at shade along the routes people actually walk, sidewalks, footpaths, and squares. It's about the ability to move through the city comfortably, not just whether shade exists in private gardens."

Thanks for the extra detail, Lukas!

FROM ELSEWHERE

Here's what I found interesting, important or delightful this week:

The mathematician fighting drug cartels. Rafael Prieto-Curiel's models have attempted to quantify the size of Mexico's organized crime networks, causing controversy along the way.

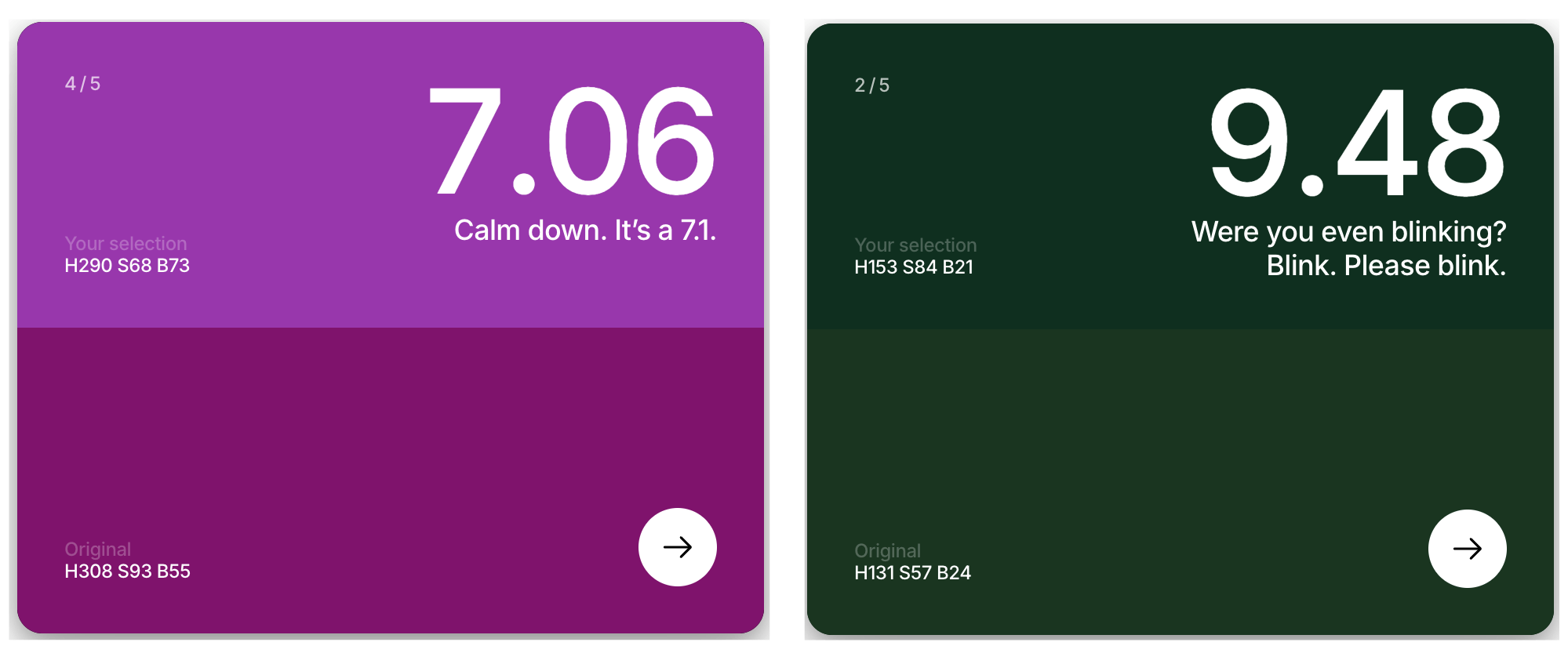

Here's a colour. Recreate it. This simple colour game is addictive and frustrating. Definitely worth wasting some time on.

The hidden price of digital life. Watch as the Anything Counter tallies up what happened online today: The number of selfies taken, YouTube hours uploaded, fake reviews posted and accidents due to digital distractions.
